Out-of-state college attendance by Illinois residents is a problem. Historically, a strong economy, large population, and functional government shielded Illinois from the negative impacts of migration. That is no longer the case. Click here to see a visualization of the impact.

The Magnitude

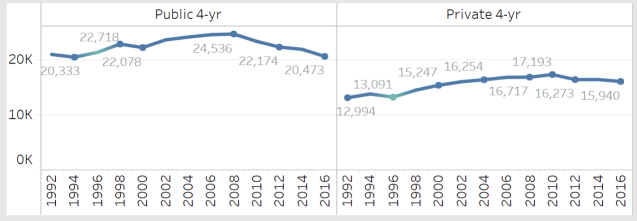

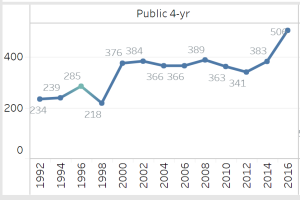

Figure 1 shows the enrollment of first-year students from Illinois at Illinois 4-year colleges in the last 24 years. 4-year public enrollment hasn’t grown at all. In fact, it has decreased by almost 20% in the last 10 years alone.

Figure 1. First-year Student Enrollment of Illinois Residents at Illinois 4-year Colleges, 1992-2016. See this visualization for more information. Overall enrollment in Illinois colleges (including Illinois and out-of-state residents) has fallen by about 16,000 students in the last seven years alone.

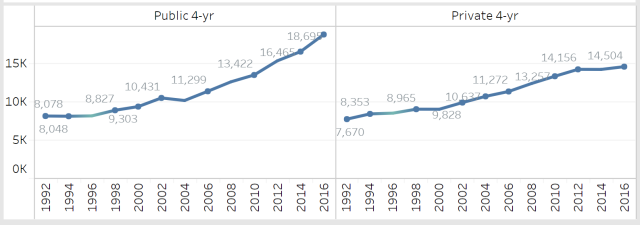

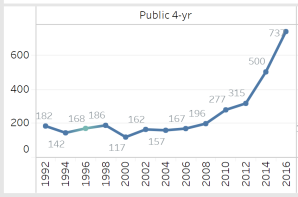

Figure 2 displays the enrollment of first-year students from Illinois at 4-year colleges in other states. While the enrollment of Illinois residents in Illinois colleges has been flat, out-of-state enrollment has nearly doubled.

Figure 2. First-year Student Enrollment of Illinois Residents at Out-of-State 4-year Colleges, 1992-2016. See this visualization for more information.

Is Out of State Enrollment Really a Problem?

Think of Illinois taxpayers as investors in people. Businesses invest in equipment, space, productivity and – especially – people. They expect returns on these investments.

Illinois taxpayers invest in the future of the state through education and training. The hope is that investments in people through education and training see positive returns.

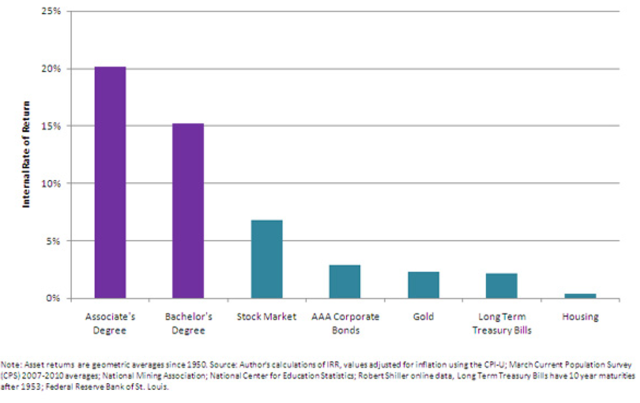

The evidence is clear that investments in people through education and training produce positive returns to state taxpayers. In fact, investments in people produce higher rates of return than pretty much any other kind of investment, including the stock market, gold, and real estate.

Figure 3. Rate of Return on a College Degree Compared to Other Investments. Source: Thompson, D. (2012, March 27). What’s More Expensive Than College? Not Going to College. The Atlantic.

Costs

Illinois taxpayers invest about $12,000 annually for each K-12 student. That amounts to $156,000 for each student over the 13 years from kindergarten through 12th grade. Across all students, that’s an investment of billions of dollars in the future of the state.
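The per-student figure follows from simple multiplication. Here is a minimal sketch, assuming 13 school years (kindergarten plus grades 1 through 12) and a flat $12,000 per year with no adjustment for inflation. The constant and function names are illustrative and do not come from any official source.

```python
# Rough arithmetic behind the K-12 investment figure quoted in the text.
ANNUAL_COST_PER_STUDENT = 12_000  # dollars per year, as stated in the text
SCHOOL_YEARS = 13                 # assumption: kindergarten + grades 1-12


def k12_investment(students: int = 1) -> int:
    """Total K-12 spending for the given number of students, in dollars."""
    return ANNUAL_COST_PER_STUDENT * SCHOOL_YEARS * students


per_student = k12_investment()
print(f"Per student: ${per_student:,}")
```

Passing a larger student count to `k12_investment` scales the total linearly, which is how a per-student figure in the hundreds of thousands turns into billions statewide.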

Returns

As Figure 3 showed, investments in people yield high returns. Despite news stories and bloggers who write about increasing tuition and student loan debt, the returns from education and training are still significantly higher than pretty much any other investment one can make.

For Illinois, these returns mean a larger tax base, lessening the tax responsibility and costs for all citizens. They also mean additional investments in the quality of life of the state’s citizens through better roads, schools, parks, and other amenities.*

*This blog focuses mostly on the economic returns of education. It should be acknowledged that higher education produces non-economic returns that also make positive contributions to a region’s quality of life.

What About People Who Move Back?

One might expect students who leave for college to move back and work in Illinois after they graduate. Unfortunately, this is not the case in Illinois. A study by Eric Lichtenberger and Cecile Dietrich found that although many out-of-state college students return to Illinois after college graduation, their employment rate in Illinois is substantially lower than that of students who stay in Illinois for college. This is consistent with migration research. Once a person moves to a new region, they are much more likely to move again and less likely to return to their native state.

There’s another problem with out-migration. Lichtenberger and Dietrich also noted that Illinois students who attend out-of-state colleges and universities are much more likely to come from upper-income families and enroll in high-demand fields in science, research and health.

Economists call this phenomenon brain drain. History is full of examples of countries and states that have taken advantage of brain drain. Many of the technological advances made by the U.S. in the 1950s and 1960s, for example, came from Jewish scientists who fled Germany to the U.S. during the Second World War.

How Other States Win from Illinois’ Neglect of Higher Education

Other states have caught on to the idea that Illinois residents don’t feel there are enough options for them in Illinois, that the state underfunds and has no coherent plan for higher education, and that out-of-state tuition is perceived to be lower than in-state tuition.

Twenty percent of all first-year students at Mizzou are now from Illinois, compared to about 5% in 1996. In 2012, the Chicago Tribune picked up on this and published an article titled “The University of Chicagoland at Missouri.”

Students are not only being sold the college, however. Out-of-state colleges sell their state as a good place to live and work after graduation. Judging by high migration rates for all Illinois citizens, the recruitment appears to be working. While enrollment of Illinois residents in border states like Missouri and Iowa has always been strong, enrollment in far-off states like Alabama and Colorado has increased dramatically in the last 10 years.

Figure 4. First-year Student Enrollment of Illinois Residents at Colorado 4-year Public Colleges, 1992-2016. See this visualization for more information.

Figure 5. First-year Student Enrollment of Illinois Residents at Alabama 4-year Public Colleges, 1992-2016. See this visualization for more information.

Other states’ taxpayers win by gaining all the returns with none of the upfront costs in K-12 education. It’s like inheriting a constant stream of income from a trust fund in which you invested zero dollars.

Proposed Solutions

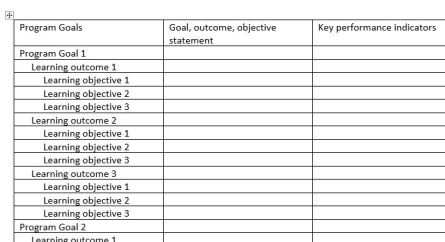

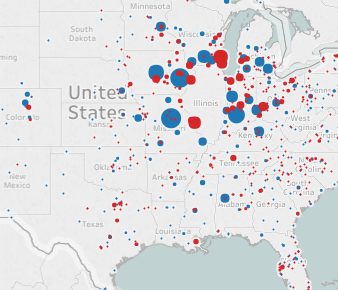

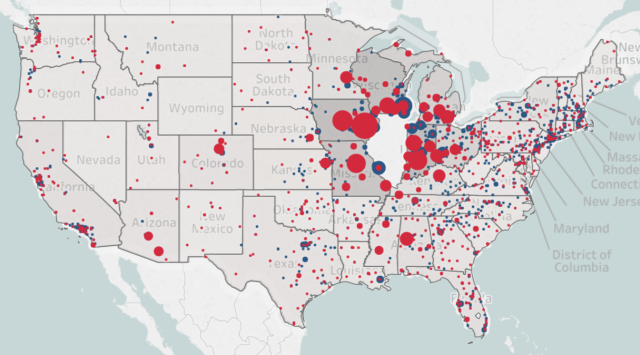

Figure 6. First-year Student Enrollment of Illinois Residents at Out-of-State 4-year Colleges, Fall 2016. Public colleges are in red, private in blue. See this visualization for more information.

Mission Differentiation

The largest red dots on the map above represent the University of Iowa, Iowa State, Missouri, Wisconsin, Michigan, Indiana, Minnesota, Purdue, Ohio State and Kentucky. They are essentially out-of-state versions of the University of Illinois.

Many states have differentiated systems, with large universities focused on research, public liberal arts colleges focused on teaching, and institutions focused on science and technology. They do this because it fits with state economic and social priorities. It also gives residents many options, keeping them in state.

As stated in A Decade of Decline, Illinois has been unable to establish shared state goals and priorities for higher education and fails to allocate resources strategically, if they are allocated at all. A dysfunctional political culture and a lack of will among residents to make public investments in higher education have had a significant negative impact on higher education in Illinois. Although it is unlikely to be adopted, the mission differentiation approach is probably the best solution.

Bringing College Graduates Back (and Stealing College Graduates from other States)

This approach accepts the idea that there is no political will to adequately fund higher education in Illinois or to ensure adequate planning and good governance. It dedicates resources and energy to aggressively recruiting college graduates from other states.

Economically, this might make sense. There is little to no investment by Illinois residents, yet the state reaps all the returns.

There are significant challenges to this approach, however. Illinois is hemorrhaging residents. Illinois was ill-prepared for the transition from a manufacturing/goods-producing to a knowledge/service-based economy (the exception being Chicago). Today’s knowledge workers are looking not only for quality jobs, but also for quality of life. Infrastructure, business incubation, environments where people can share knowledge across industries and sectors, and public amenities should be viewed as investments, not public costs. Unfortunately, it’s not clear that Illinois is doing much to attract a vibrant workforce looking for jobs relevant in today’s economy.

Merit Aid

A broad merit aid approach will likely have minimal impact on keeping students in state. College student migrants are not making decisions based on cost – they are making them based on perceived institutional quality and the desire for a specific college experience. They generally come from high-income families and can easily afford the out-of-state costs.

When a majority of Illinois students leave Illinois to attend large research universities with prominent athletic programs – and there’s only one university in the state that offers that experience – those residents are sending a message. By contrast, the populations of Iowa and Kansas (about 3 million each) are each a quarter of Illinois’ (12 million). Yet each state is able to sustain two large public universities.
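The comparison with Iowa and Kansas can be put in per-university terms. This is a rough sketch using only the round population figures and university counts given in the text (one such university in Illinois, two each in Iowa and Kansas). The dictionary layout and function name are illustrative.

```python
# Residents per large public research university, using the round
# figures quoted in the text (not official census counts).
states = {
    "Illinois": {"population": 12_000_000, "large_public_universities": 1},
    "Iowa": {"population": 3_000_000, "large_public_universities": 2},
    "Kansas": {"population": 3_000_000, "large_public_universities": 2},
}


def residents_per_university(state: str) -> float:
    """Population divided by the number of large public universities."""
    info = states[state]
    return info["population"] / info["large_public_universities"]


for name in states:
    ratio = residents_per_university(name)
    print(f"{name}: {ratio:,.0f} residents per university")
```

Under these round numbers, Illinois has eight times as many residents per such university as Iowa or Kansas.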

A targeted merit aid approach focused on high-demand fields, like science or technology, could potentially work. But the campus programs have to exist, and they have to be mature and adequately funded. A 50-year-old I.T. or chemistry program at Purdue or Mizzou, for example, has a substantial head start. And there’s the risk that graduates could still leave the state after graduation.